I research machine learning and neuroscience at Meta. During my PhD at Columbia University I analyzed electrical activity in the brain to reverse engineer the algorithms supporting complex behavior.

Here are my scientific publications, and here are summaries of select projects:

Neuroscientists use calcium imaging to monitor the activity of large populations of neurons in awake, behaving animals (like in this beautiful example). However, calcium imaging can be very noisy, making neuron identification challenging. I use a two-stage convolutional neural network approach to find neurons in noisy data. I’m collaborating with Eftychios Pnevmatikakis at the Simons Foundation to see if this approach combined with standard matrix factorization techniques outperforms current calcium imaging segmentation algorithms.

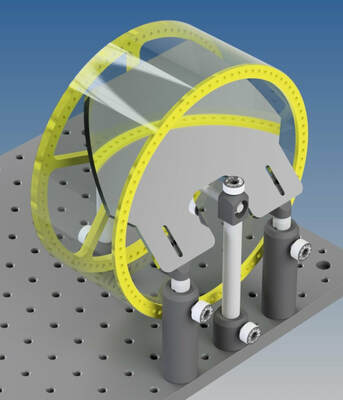

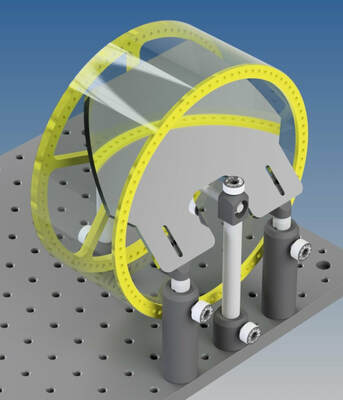

While the neural control of some simple types of movement is well understood, how the brain coordinates complex, whole-body behavior remains a mystery. For my PhD research I developed a closed-loop system in which mice run on top of a wheel and skillfully leap over motorized hurdles while I record from their brains. I developed a custom linear motion system (shown to the left) along with custom microcontroller software that moves hurdles towards mice at the same speed that the mice are running, simulating what it is like to jump over stationary objects. Using my open source motion tracking system I then relate the 3D movements of mice to the activity of neurons that control these movements.

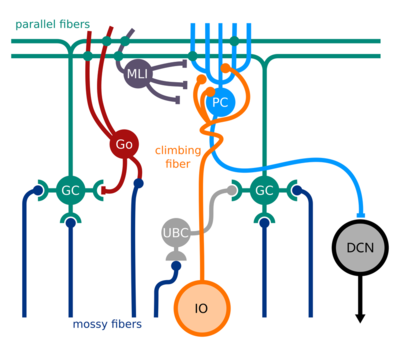

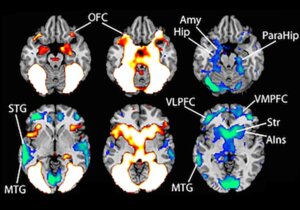

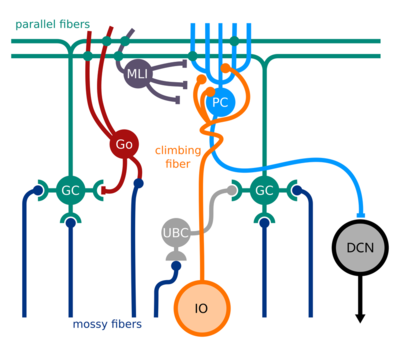

With neuroscientists working on topics as diverse as the eyes of flies, the guts of mice, and the neural basis of free will, it’s easy to get the impression that the field is engaged in what Ernest Rutherford (probably, and derisively) called “stamp collecting” - amassing facts about the world without uncovering general principles from which deeper understanding emerges. A nice counter-example comes from Nate Sawtell’s work on electric fish. In an incredible case of convergent evolution, a brain region that helps a strange fish detect electricity bears remarkable similarity to the cerebellum, a motor control brain region in mammals. In this review Nate Sawtell and I argue that both brain regions implement the same underlying algorithm, and we show how it can be leveraged both for sensory processing and the control of movement.

New tools keep popping up that allow neuroscientists to record from greater numbers of neurons in the brain. For those of us who wish to understand how the brain controls behavior, we need to make sure our measurements of behavior keep pace with our measurements of neural activity. I designed an open source running wheel for mice that facilitates 3D reconstruction of body pose. This wheel is currently being used by labs around the world. With a single camera, access to a laser cutter, and a small number of parts you can achieve kinematic analyses that previously required thousands of dollars’ worth of cameras and software.

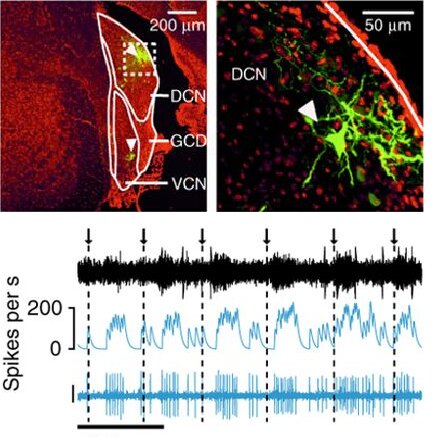

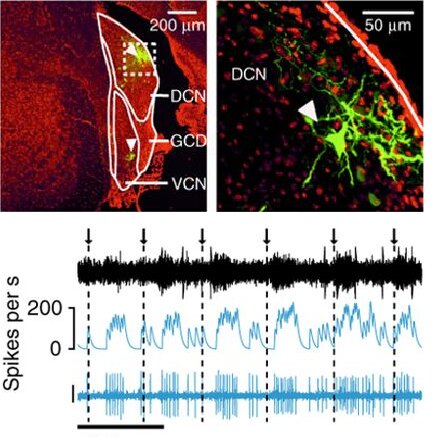

Many neuroscientists fall into one of two camps: those who figure out how the brain controls the body, and those who figure out how the brain processes sensory information. However, some of the coolest stuff occurs at the intersection between sensation and action. For example, how does the brain distinguish sensations generated by our own actions (like the sound of our footsteps) from those caused by things in the world (like the sound of a predator sneaking up to eat you!)? In Nature Neuroscience, we show how a little brain region in mammals tunes out the sounds of animals’ own behavior, potentially enhancing their ability to detect critical sounds from the outside world.

Some neuroscientists study sensation, and some study action. However, most animal behavior lies in the murky area between these extremes. The whisker movements mice use to sense their environment illustrate how perception and action are inextricably linked. Chris Rodgers studies such ‘active sensation’ by recording from the brains of mice while they explore objects with their whiskers. I worked with Chris to build a convolutional neural network that measures the movements of individual whiskers with high fidelity. This approach avoids the heavy-handed feature engineering of previous algorithms and also makes for some really cool videos!

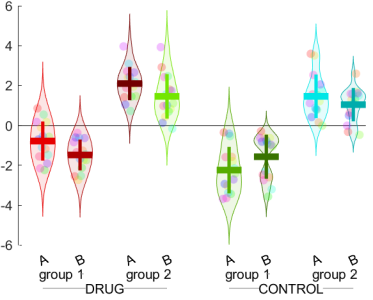

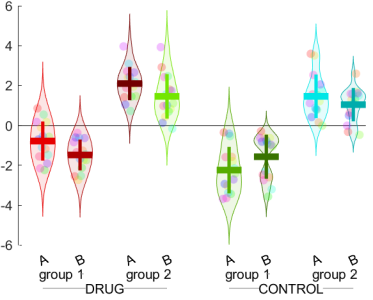

Just because your data are terrible doesn’t mean they can’t look great! I often analyze data with complex hierarchical structures. Making nice bar plots that capture relationships in multi-factorial designs can require annoying nested for loops that are difficult to modify. I developed barFancy, a MATLAB function that requires only a single tensor as input. It produces pretty bar and/or violin plots with tons of visualization options.

Scientists often need to generate temporally precise electrical signals to control devices around the lab (lasers, cameras, current generators, etc.). Most signal generators are big, expensive, and difficult to operate. I designed an easy-to-use, open source signal generator - SignalBuddy - that can be made for ~$20 in parts. SignalBuddy has a nice 3D printed enclosure and replaces expensive signal generators in many lab applications.